AI coding context tools compared

How coding agents, context MCPs, code host MCPs, and Sourcegraph handle codebase understanding.

A comparison of how AI coding tools build, maintain, and scope their understanding of a codebase. It covers cross-repository search, code navigation, precise SCIP indexing, coverage guarantees, and persistent code intelligence.

Five approaches to codebase context

Every AI coding tool needs context about your codebase to be useful. The tools differ in how they get it, how long they keep it, and how much of the codebase they can reason about at once.

Detailed capability comparison

Four dimensions that determine how effective each tool is for engineering tasks at scale. Tools are ordered by depth of capability.

| # | Tool | Scope & Coverage | Search & Navigation | Collaboration | Remediation |
|---|---|---|---|---|---|
| 1 | Sourcegraph | Org-wide across GitHub, GitLab, Bitbucket, Gerrit, Perforce, Azure DevOps. All branches and revisions. Exhaustive coverage: every instance across all repos. Code Monitors alert on new matches. | Structural, semantic, and regex search. Symbol-aware with exact match guarantees. Precise go-to-definition and find-all-references across repos via SCIP. Commit search, diff search, blame, and full change history. | Single shared infrastructure for all developers and AI agents. MCP server with search, code nav, Deep Search, file browsing, commit/diff search. | Batch Changes across thousands of repos. Code Insights tracks migration progress over time. |
| 2 | Augment Code | Multi-repo via remote MCP (indexed GitHub orgs). High recall via semantic search across indexed repos. | Semantic search with pattern matching. Cross-repo dependency awareness. Commit history indexed and searchable (Context Lineage). | Shared via remote MCP server. Available as MCP server (local and remote). | Agent-driven changes within indexed scope. |
| 3 | Cursor | Single repo indexed; limited multi-repo via PR fetch. Best-effort coverage depends on embedding recall. | Semantic similarity plus text hybrid search. Limited cross-repo navigation within indexed project. Merged PR summaries indexed. | Per-user index. Supports external MCP servers. | Multi-file edits within a single project. |
| 4 | GitHub MCP | Multi-repo via GitHub API. Scoped to GitHub API search limits. | GitHub code search (text with language and path filters). File browsing across repos via API. Commits and PRs accessible via API. | Shared via GitHub (no persistent model). Available as MCP server. | PR creation via API. No cross-repo orchestration. |
| 5 | Coding Agents | Single local repository. Best-effort coverage depends on what the agent explores. | Text and regex (grep, ripgrep). No cross-repo navigation. Manual git log/diff via shell. | Per-session, per-user only. N/A (agent is the host). | Manual. Single repo per session. |


What makes Sourcegraph's code intelligence precise: SCIP indexing


SCIP (a recursive acronym for SCIP Code Intelligence Protocol) is a language-agnostic protocol for indexing source code. SCIP powers the precise code navigation features that make Sourcegraph's coverage guarantees possible: go-to-definition, find-all-references, and cross-repository dependency resolution.

Unlike embeddings-based search (which returns approximate results based on vector similarity) or text search (which matches strings without understanding code structure), SCIP indexing produces structurally precise results. When you search for all references to a function, SCIP returns every reference, not a ranked list of likely matches.
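The gap between matching strings and resolving symbols can be illustrated with a small single-file sketch. This is not SCIP and does not use any Sourcegraph API: it uses Python's standard `ast` module as a stand-in for a symbol-aware index, and the sample source and function names (`text_matches`, `symbol_references`) are hypothetical. A real SCIP index also resolves symbols across files and repositories, which this toy does not attempt.

```python
import ast

# Hypothetical sample file: `load` appears as a definition, inside another
# identifier, in a comment, in a string, and as two real call sites.
SOURCE = '''
def load(path):
    return path

def reload_all(paths):
    # load every path again
    return [load(p) for p in paths]

message = "failed to load config"
result = load("a.txt")
'''


def text_matches(source, name):
    """Grep-style: line numbers where the raw string occurs anywhere."""
    return [i for i, line in enumerate(source.splitlines(), 1) if name in line]


def symbol_references(source, name):
    """Symbol-aware: line numbers where `name` is used as an identifier."""
    tree = ast.parse(source)
    return sorted(
        node.lineno
        for node in ast.walk(tree)
        if isinstance(node, ast.Name) and node.id == name
    )


if __name__ == "__main__":
    print("text:", text_matches(SOURCE, "load"))
    print("symbol:", symbol_references(SOURCE, "load"))
```

The text search also reports the definition, the `reload_all` identifier, the comment, and the string literal, while the symbol-aware pass reports only the two call sites.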

SCIP indexers currently support Go, Java, TypeScript, Python, Ruby, C++, C#, and additional languages. Indexing runs incrementally, updating as code changes rather than requiring full re-indexing.

SCIP moves to open governance (March 2026)

Sourcegraph announced that SCIP is transitioning to an independent project with open governance. A Core Steering Committee has been established with engineers from Uber, Meta, and Sourcegraph to guide SCIP's future development.

The move to open governance signals industry-wide commitment to making precise code intelligence an open standard, not a proprietary feature tied to a single vendor.

The key distinction: relevance vs. coverage

Relevance

Most AI coding tools answer: what code is most relevant to this task? They optimize for relevance, using semantic similarity, embeddings, or agentic exploration to surface the most useful context.

Coverage

Many engineering tasks require a different guarantee: what exists in the system that must not be missed? A migration requires identifying every call site. A security audit requires finding all instances of a vulnerable pattern across every repository.

Sourcegraph is the only tool in this comparison that provides exhaustive coverage guarantees, because its code graph is built on precise SCIP indexing rather than approximate similarity matching.
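A toy sketch of the relevance and coverage distinction, under stated assumptions: the snippet records, the word-overlap scoring, and the `calls` field are all hypothetical stand-ins, not how any tool in this comparison is implemented. Word overlap plays the role of similarity ranking, and a pre-resolved list of called symbols plays the role of a precise index.

```python
# Hypothetical corpus: each record describes one code location and the
# symbols it actually calls (as a precise index would know them).
SNIPPETS = [
    {"repo": "billing", "text": "charge customer using legacy payment client", "calls": ["legacy_pay"]},
    {"repo": "checkout", "text": "submit order and call legacy payment api", "calls": ["legacy_pay"]},
    {"repo": "reports", "text": "nightly job reconciles ledgers", "calls": ["legacy_pay"]},
    {"repo": "docs", "text": "guide to the legacy payment migration", "calls": []},
]


def relevance_search(snippets, query, k):
    """Rank by shared-word count and keep the top k (a similarity stand-in)."""
    query_words = set(query.lower().split())

    def score(snippet):
        return len(query_words & set(snippet["text"].lower().split()))

    return sorted(snippets, key=score, reverse=True)[:k]


def coverage_search(snippets, symbol):
    """Return every location that calls `symbol`, with no ranking or cutoff."""
    return [s for s in snippets if symbol in s["calls"]]


if __name__ == "__main__":
    top = relevance_search(SNIPPETS, "legacy payment", k=3)
    print("relevance:", [s["repo"] for s in top])
    print("coverage:", [s["repo"] for s in coverage_search(SNIPPETS, "legacy_pay")])
```

In this sketch the ranked search surfaces the migration guide, which never calls `legacy_pay`, and misses the `reports` job, whose description shares no words with the query. The exhaustive lookup returns all three call sites.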

When to use each approach

Coding Agents

Best for single-repo tasks, exploratory coding, quick fixes, and prototyping. Works well when scope is contained and the agent can discover what it needs through file exploration.

Cursor

Best for daily development within a single project. Codebase indexing provides relevant suggestions and the agentic mode handles multi-file edits effectively within the indexed scope.

Augment Code

Best for teams wanting better context quality across multiple repositories. The semantic index improves agent accuracy and reduces hallucinations on complex tasks.

GitHub MCP Server

Best for workflow automation, issue management, and PR interaction. Provides agents with access to GitHub data but does not provide deep structural understanding of code.

Sourcegraph

Best for enterprise-scale engineering work that requires completeness: cross-repository migrations, security audits, large-scale refactoring, finding every reference to a deprecated API, and organization-wide code insights.

How Sourcegraph differs from coding agents and context engines

Coding agents

Agents like Claude Code and Codex are powerful at exploring and reasoning about code within a session. But they rebuild their understanding from scratch on every task, and their view is limited to what they happen to search for.
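As a rough illustration of that session-local style of discovery, the sketch below regex-scans a single checkout, much as an agent might with grep or ripgrep. The function name `grep_repo`, the file names, and the `legacy_pay` symbol are hypothetical, and the temporary directory stands in for a local repository. Nothing is persisted between calls, and only the one directory tree is visible.

```python
import re
import tempfile
from pathlib import Path


def grep_repo(root, pattern, suffixes=(".py",)):
    """Regex-scan files under one checkout.

    Returns (relative path, line number, stripped line) for each match.
    """
    regex = re.compile(pattern)
    hits = []
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file() or path.suffix not in suffixes:
            continue
        text = path.read_text(encoding="utf-8", errors="ignore")
        for lineno, line in enumerate(text.splitlines(), 1):
            if regex.search(line):
                hits.append((path.relative_to(root).as_posix(), lineno, line.strip()))
    return hits


def demo():
    """Build a throwaway two-file 'repository' and search it for call sites."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "app.py").write_text("from client import legacy_pay\nlegacy_pay(42)\n")
        (root / "util.py").write_text("def helper():\n    return 1\n")
        return grep_repo(root, r"\blegacy_pay\(")


if __name__ == "__main__":
    print(demo())
```

Call sites in any repository that is not checked out locally, or that the pattern does not anticipate, are invisible to this kind of scan.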

Context engines

Context engines like Augment Code improve on this by maintaining a persistent semantic index. This gives agents a better starting context and reduces wasted exploration. But semantic search still returns approximate results based on embedding similarity.

Sourcegraph

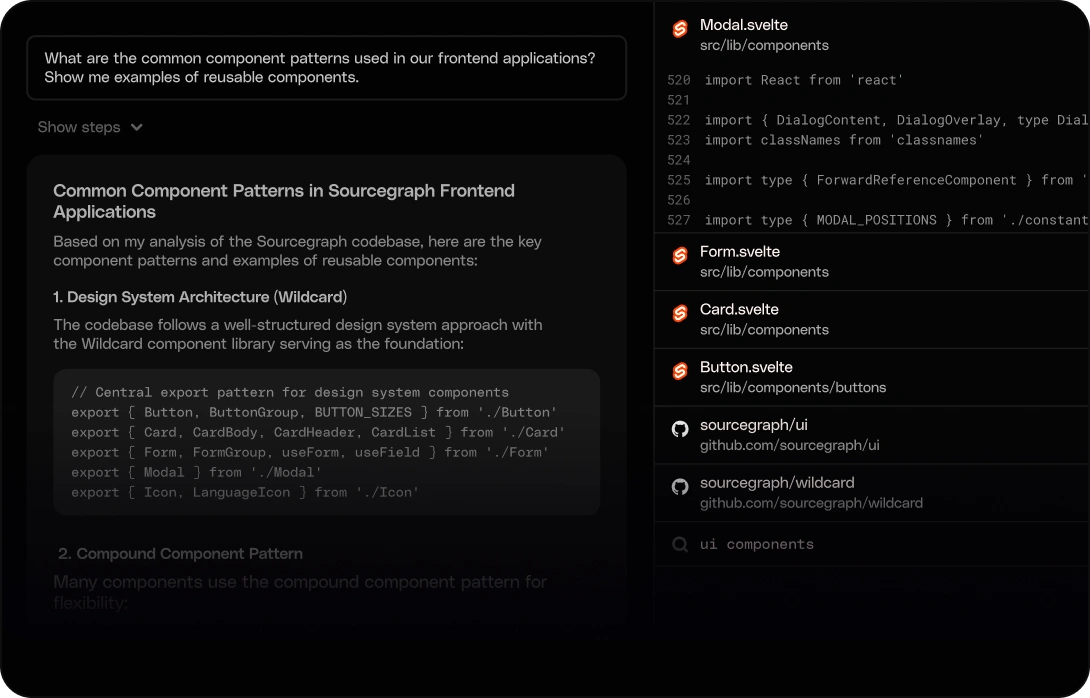

Sourcegraph maintains a continuously updated code graph built on precise SCIP indexing. Queries return exact, structurally aware results across every repository in the organization. Deep Search extends this further by layering agentic, natural language exploration on top of the precise code graph.

Sourcegraph's MCP server exposes this intelligence to any MCP-compatible agent. Agents that previously relied on grep and file exploration can now query a system that already understands how the codebase is organized.
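For orientation, here is a minimal sketch of what an agent-side tool invocation looks like at the protocol level. The envelope follows the Model Context Protocol's JSON-RPC 2.0 `tools/call` request shape. The tool name `code_search` and its `query` argument are placeholders chosen for illustration, not documented Sourcegraph tool names. A real client would discover the available tools and their argument schemas from the server with a `tools/list` request.

```python
import json


def build_tool_call(request_id, tool_name, arguments):
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


if __name__ == "__main__":
    # `code_search` and `query` are hypothetical placeholders.
    request = build_tool_call(1, "code_search", {"query": "legacy_pay"})
    print(json.dumps(request, indent=2))
```

The design point is that the agent sends a structured request to a shared, already-indexed service rather than walking the file tree itself.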


Give your agents the context they need

Connect Sourcegraph to your AI coding tools via MCP and unlock precise, cross-repository code intelligence for every developer and agent in your organization.