Deep Search has always been good at finding and explaining code. It can now also count, rank, and aggregate it without blowing up the context window.

A new sandboxed scripting tool lets the agent run programmatic aggregations across many searches in a single turn. Questions that don't fit into a single query, e.g. top-N rankings, cross-search joins, distributions across many files, can now be answered end-to-end, with every underlying search still cited so you can verify how the answer was assembled.

With the new tool, Deep Search combines Code Search's depth and exhaustiveness with agentic reasoning, so it can compute complex results from a natural-language prompt. When a question calls for exhaustive research, the agent fans out across many precise queries, processes every match, and aggregates the results into the answer.

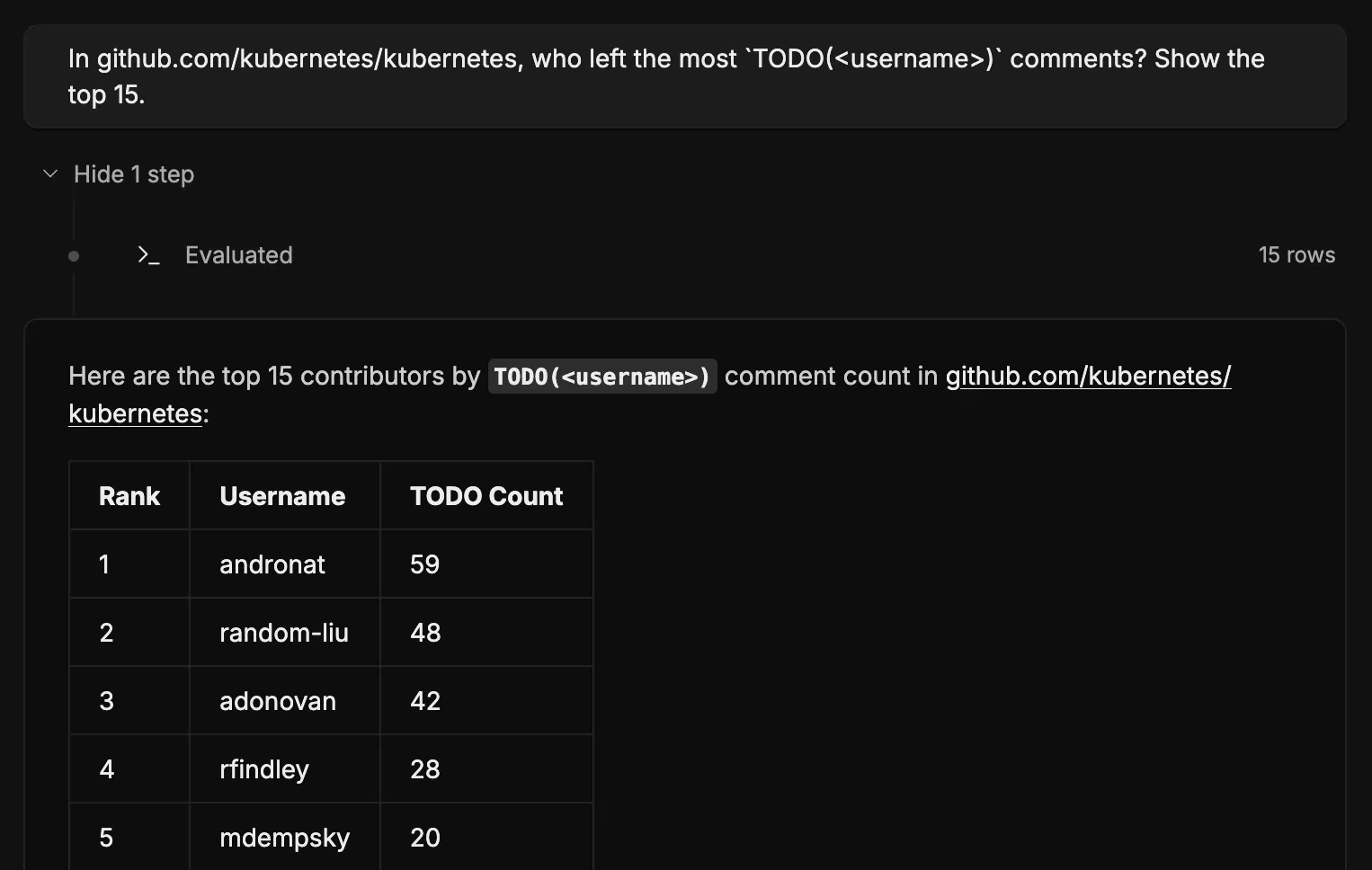

For example, ask Deep Search: "In github.com/kubernetes/kubernetes, who left the most TODO(<username>) comments? Show the top 15." Deep Search iterates through every match, groups by author, and returns a ranked table, instead of just the first page of hits.
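To make the "iterate, group, rank" step concrete, here is a minimal Python sketch of that kind of aggregation. It is an illustration only, not the script Deep Search generates or the API of its sandboxed tool: the function name `rank_todo_authors`, the regular expression, and the sample lines are all assumptions made up for this example, and the input stands in for the matched lines a search would return.

```python
import re
from collections import Counter

# Captures the username inside TODO(<username>). Assumed pattern for this sketch.
TODO_AUTHOR = re.compile(r"TODO\(([^)\s]+)\)")


def rank_todo_authors(matched_lines, top_n=15):
    """Count TODO(<username>) occurrences per author and return the top_n pairs."""
    counts = Counter()
    for line in matched_lines:
        # A single line may contain more than one TODO(<username>) marker.
        for author in TODO_AUTHOR.findall(line):
            counts[author] += 1
    return counts.most_common(top_n)


# Hypothetical sample standing in for search results; not real repository data.
sample = [
    "// TODO(alice): remove once the flag is gone",
    "// TODO(bob): handle the nil case",
    "# TODO(alice): add a test",
    "// TODO: no owner, so this line is skipped",
    "// TODO(alice): tidy up, see also TODO(carol)",
]

for author, count in rank_todo_authors(sample, top_n=3):
    print(f"{author}\t{count}")
```

The point of the sketch is that the ranking is computed over every match rather than a truncated first page, which is what makes a top-N answer trustworthy.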

The same shape unlocks questions that previously meant exporting search results and scripting against them yourself:

- "How far along is the `interface{}` → `any` migration in golang/go? Break it down by top-level package."

- "In microsoft/vscode, which files have been touched in the most fix/bugfix commits in the last 6 months, and who's been fixing them?"

- "List the top 20 internal Go packages in sourcegraph/sourcegraph by number of distinct other internal packages that import them."

- "Which files were most frequently modified in the past year and who made changes to them?"

- "What's the total of all resources (cpu + mem) across all deployments on k8s in myorg/infra?"